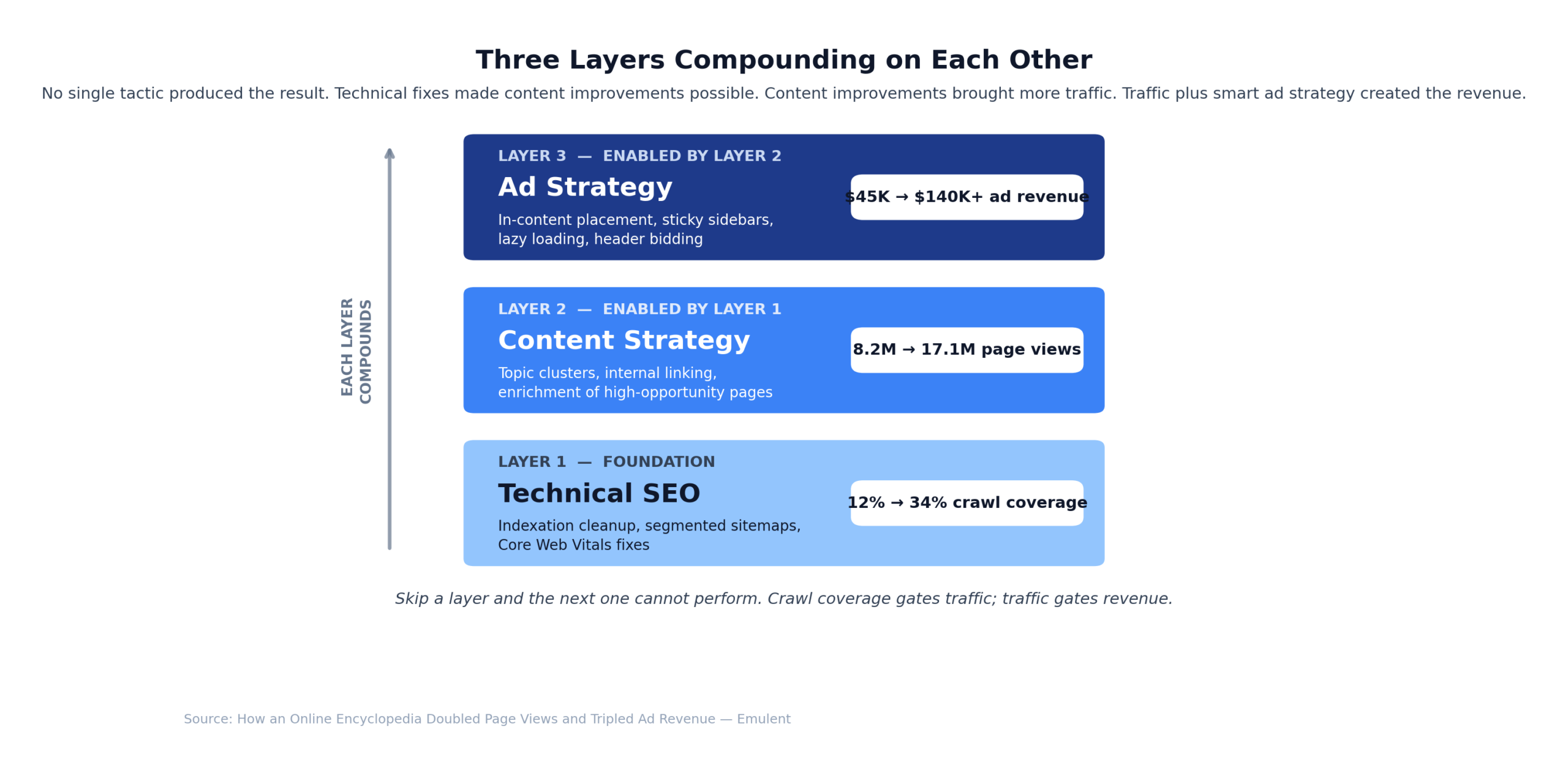

Author: Bill Ross | Reading Time: 5 minutes | Published: May 4, 2026 | Updated: April 29, 2026

A well-known online encyclopedia with over four million pages reached out with a problem we see often: a huge content library, but traffic and ad revenue stuck in neutral. Despite years of credibility, most of their pages barely registered with Google. We built a practical strategy focused on technical SEO, content quality, and smarter ad placement. Fourteen months later, monthly page views had doubled, and ad revenue had tripled.

It’s easy to think that more pages should mean more traffic. In reality, for sites this size, the opposite is usually true. When Google lands on a site with millions of URLs, it has to pick and choose which URLs to crawl and index. That’s crawl budget in action, and it was the first real roadblock we found. Google’s crawlers were spending their time on thin, duplicate, or outdated pages, while the best content sat buried. The result: visitors averaged just over one page per session, bounce rates topped 70%, and ad revenue flatlined because most users left before seeing any ads.

“When a site has millions of pages, the biggest SEO challenge isn’t creating more content. It’s making sure Google can actually find and prioritize the content that matters. Crawl budget becomes the gatekeeper to everything else.” – Strategy Team, Emulent Marketing.

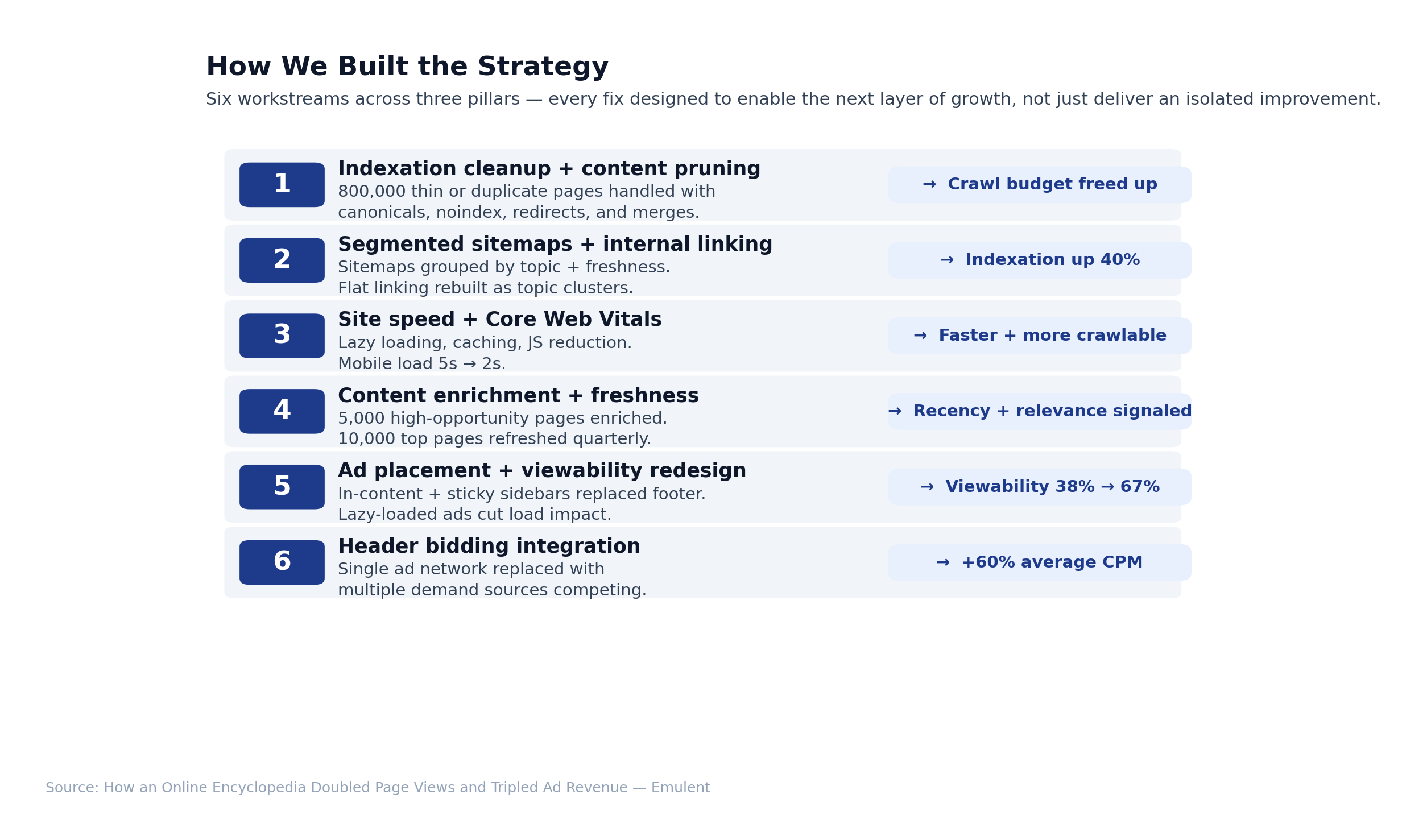

We started with a technical audit to see how Google’s crawlers actually moved through the site. The numbers told the story: only about 12% of pages were getting crawled each month. Most of Google’s attention was wasted on redirect chains, duplicate URLs, and pages with barely any unique content.

Indexation management and content pruning: We uncovered 800,000 pages that were either duplicates or too thin to add value. Rather than just deleting them, we used canonical tags to consolidate duplicates, set noindex on weak entries, merged similar topics, and redirected outdated URLs. This took three months of steady work and daily checks to make sure we didn’t lose anything valuable along the way.

XML sitemap restructuring: The original sitemap dumped all URLs into a single giant file. We switched to segmented sitemaps, grouping pages by topic and by how often they were updated. High-priority areas like science, history, and geography got their own sitemaps, refreshed weekly. Older or less important content went into separate sitemaps with slower update cycles. This made it clear to Google which parts of the site should get the most attention.

Site speed and Core Web Vitals: We tackled slow page templates by adding lazy-loading for images, tightening caching, and reducing JavaScript. Mobile load times dropped from nearly five seconds to just over two, and layout shifts became a non-issue.
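To make the segmented-sitemap idea concrete, here is a minimal Python sketch of grouping pages by topic and refresh cadence and emitting one sitemap file per group plus a sitemap index. It is an illustration only, not the tooling used on the project: the function name, the page dictionary keys (`url`, `topic`, `cadence`, `lastmod`), and the file naming scheme are all assumptions. The 50,000-URL cap per file comes from the sitemaps.org protocol.

```python
from collections import defaultdict
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file URL limit in the sitemaps.org protocol


def build_segmented_sitemaps(pages, base_url):
    """Group pages by (topic, cadence) and build one <urlset> per group.

    `pages` is an iterable of dicts with hypothetical keys:
    "url", "topic", "cadence" (e.g. "weekly"), and "lastmod" (YYYY-MM-DD).
    Returns (files, index_xml), where `files` maps filename -> XML string.
    """
    groups = defaultdict(list)
    for page in pages:
        groups[(page["topic"], page["cadence"])].append(page)

    files = {}
    for (topic, cadence), members in sorted(groups.items()):
        # Split oversized groups so no single file exceeds the protocol limit.
        starts = range(0, len(members), MAX_URLS_PER_FILE)
        for part, start in enumerate(starts, 1):
            urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
            for page in members[start:start + MAX_URLS_PER_FILE]:
                url = ET.SubElement(urlset, "url")
                ET.SubElement(url, "loc").text = page["url"]
                ET.SubElement(url, "lastmod").text = page["lastmod"]
            name = f"sitemap-{topic}-{cadence}-{part}.xml"
            files[name] = ET.tostring(urlset, encoding="unicode")

    # The index file points crawlers at every segment.
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for name in files:
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = f"{base_url}/{name}"
    return files, ET.tostring(index, encoding="unicode")
```

In a setup like this, the weekly segments would be regenerated on a weekly job and the slower segments less often, so the `lastmod` values in each file reflect how actively that part of the site is maintained.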

“Technical SEO for a site this large isn’t a one-time project. It’s an ongoing discipline. Every time you fix one crawl issue, you uncover two more. The teams that win are the ones who build systems for continuous monitoring rather than treating audits as a checkbox.” – Strategy Team, Emulent Marketing.

Fixing the technical issues opened the door, but it was the content strategy that actually moved the needle. We focused on three methods that worked together to drive results.

Internal linking architecture: The original linking was flat and disconnected. Most entries didn’t point to related topics, and category pages were just long lists. We rebuilt the structure around topic clusters, with broad category pages linking to subcategories, and those linking down to individual entries. Each entry also linked to related terms in the same cluster. This web of connections made it easier for both users and search engines to navigate the site. Six months in, Google was indexing 40% more pages, and users were viewing twice as many pages per session.

Content enrichment for high-opportunity pages: We used Search Console and Ahrefs to identify about 5,000 pages that were just outside the top search results. We expanded these pages to answer more related questions, added structured data, included better images, and rewrote meta titles and descriptions to boost clicks. Within four months, over a third of these pages broke into the top five positions.

Content freshness: Every quarter, we reviewed the top 10,000 pages, adding new information, links, and updates to keep them current and readable. Adding “Last Updated” timestamps signaled to Google that these pages were being actively maintained.

Doubling page views was a good start, but tripling ad revenue took more than just extra traffic. We worked closely with the client’s ad ops team to rethink how ads were placed, loaded, and measured across the site.

Ad viewability: The old setup put a big banner at the top, a sidebar ad, and a footer ad. The footer ad had less than 15% viewability because most users never scrolled that far. The top banner loaded before the content, so people skipped right past it. We tested a new layout: we swapped the footer ad for an in-content ad after the second or third paragraph, and added sticky sidebar ads that stayed visible as users scrolled. Viewability jumped from 38% to 67%, and CPMs improved because advertisers pay more when their ads are actually seen.

Lazy loading ads: We set ads to load only when they came into view, which reduced initial page load times and kept ads from slowing down the content. This fit right in with our Core Web Vitals improvements and kept layout shifts to a minimum.

“Ad revenue growth doesn’t come from cramming more ads onto a page. It comes from putting the right ads in positions where users will actually see them, while making sure those ads don’t slow down the experience. When viewability goes up, CPMs follow.” – Strategy Team, Emulent Marketing.

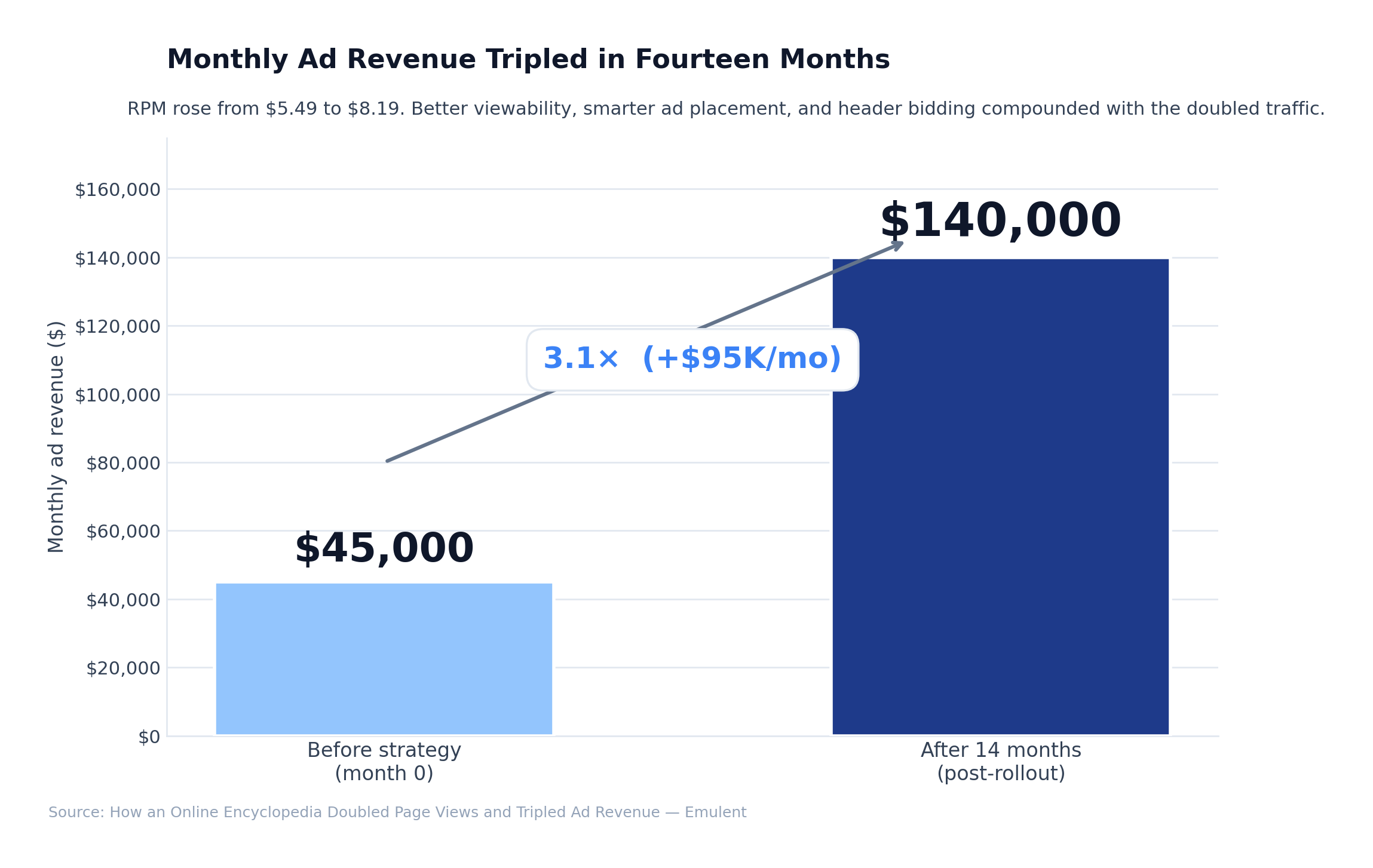

Header bidding: The client was using just one ad network, which limited competition for their ad space. We set up header bidding with multiple demand sources, so every impression went to the highest bidder. This pushed average CPM up by more than 60%. Combined with improved viewability and increased traffic, this was the main reason ad revenue tripled.

Traffic and engagement: Monthly page views jumped from 8.2 million to 17.1 million. Visitors started exploring twice as many pages per session, and bounce rate dropped from 71% to 52%. Organic search traffic more than doubled, especially on long-tail queries where our improved content now ranked at the top.

Revenue: Monthly ad revenue climbed from $45,000 to over $140,000. RPM rose from $5.49 to $8.19, thanks to better ad viewability and more competition from header bidding. The growth came from three factors working together: more page views, higher viewability, and stronger bidding per impression.

Indexation and crawl health: The share of pages crawled each month jumped from 12% to 34%. Removing 800,000 low-value pages freed up crawl budget for the content that mattered. New pages started being indexed in 2 to 4 days instead of weeks.
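The revenue figures above can be cross-checked with a little arithmetic. The sketch below assumes RPM here means ad revenue per thousand page views, and it treats "over $140,000" as exactly $140,000, so the "after" numbers are a floor. Under those assumptions it reproduces the reported RPM values and splits the revenue growth into a traffic factor and an RPM factor.

```python
def rpm(revenue, page_views):
    """Ad revenue per thousand page views."""
    return revenue / page_views * 1000


# Monthly figures quoted in the article; the "after" revenue is a floor.
before = {"revenue": 45_000, "page_views": 8_200_000}
after = {"revenue": 140_000, "page_views": 17_100_000}

rpm_before = rpm(**before)
rpm_after = rpm(**after)

# Revenue = page views x RPM / 1000, so the growth factors multiply.
traffic_factor = after["page_views"] / before["page_views"]
rpm_factor = rpm_after / rpm_before
revenue_factor = after["revenue"] / before["revenue"]

print(f"RPM before: ${rpm_before:.2f}")
print(f"RPM after:  ${rpm_after:.2f}")
print(f"Traffic growth: {traffic_factor:.2f}x")
print(f"RPM growth:     {rpm_factor:.2f}x")
print(f"Revenue growth: {revenue_factor:.2f}x")
```

Roughly a 2.1x gain in page views multiplied by a 1.5x gain in RPM gives the 3.1x gain in revenue, which matches the point that no single lever produced the result alone.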

“The most rewarding part of this project was watching each layer of the strategy compound on the next. Technical fixes made content improvements possible. Content improvements brought more traffic. More traffic, combined with smarter ad placement, created the revenue growth. No single tactic produced the result alone.” – Strategy Team, Emulent Marketing.

What Can Large Content Websites Learn From This Project?

This project reinforced a few principles that hold true for any large content site, whether you’re running an encyclopedia, a news outlet, or a big e-commerce catalog.

First, managing crawl budget is non-negotiable for sites with hundreds of thousands or millions of pages. If search engines can’t find and prioritize your best content, no amount of keyword research or new articles will fix it. Segmented sitemaps, canonical tags, and regular pruning are the basics.

Second, internal linking shapes both how much of your content gets indexed and how much users actually explore. Moving from a flat structure to topic clusters made a measurable difference here.

Third, ad revenue growth is as much about user experience as it is about monetization. Smarter ad placement, faster load times, and header bidding did more for revenue than just adding more pages.

How the Emulent Marketing Team Can Help

At Emulent, we work with content-heavy sites facing the same challenges: high page counts, flat traffic, and ad revenue that doesn’t reflect the work being put in. We bring hands-on technical SEO, content strategy, and a clear process to identify what’s holding your site back and fix it. If you want to talk about how to move your content site forward, reach out to the Emulent team.

How We Helped a 4 Million Page Encyclopedia Website 2x Page Views and 3x Ad Revenue

Why Does a Website With Millions of Pages Still Struggle to Get Traffic?

How Did We Fix Crawl Efficiency Across Four Million Pages?

With the audit in hand, our first move was to clean up the technical foundation so Google would spend its time on the pages that actually matter. We broke this into three priorities that worked together.

What Content Changes Actually Increased Page Views?

How Did We Triple Ad Revenue Without Hurting User Experience?

What Results Did the Strategy Produce Over 14 Months?

All three areas (technical SEO, content upgrades, and smarter ad strategy) worked together to move every key metric in the right direction.
